A Reality Check for Aspiring Data Scientists Targeting Machine Learning Jobs.

If you’re preparing for machine learning jobs, here’s a scenario that separates average candidates from serious data professionals:

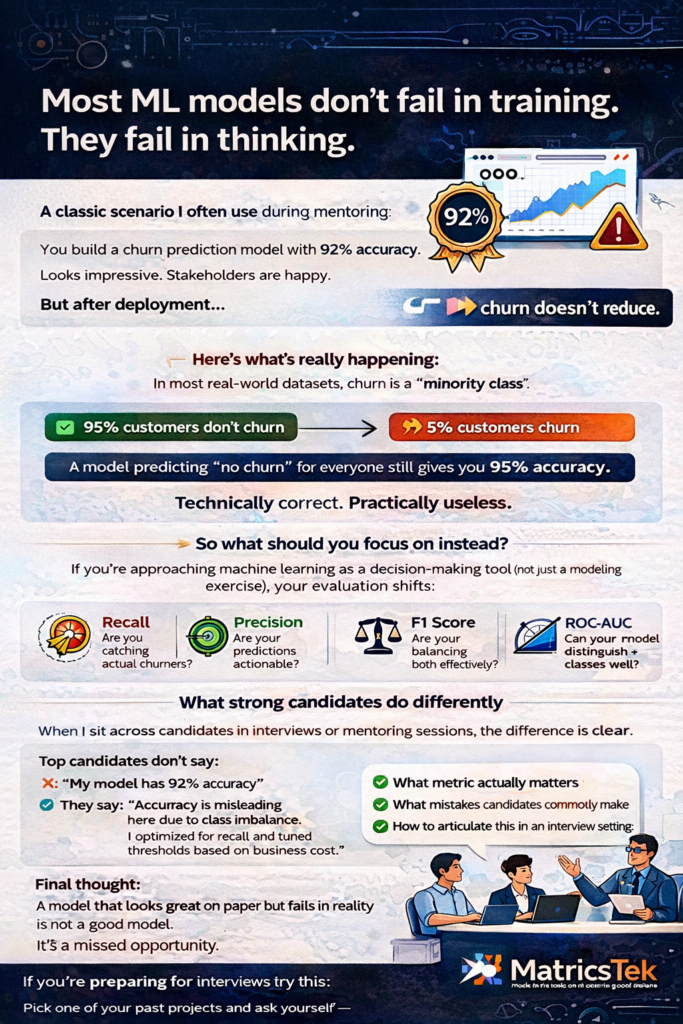

You built a churn prediction model with 92% accuracy.

Leadership is impressed.

Dashboards look great.

But… churn hasn’t reduced.

Welcome to one of the most misunderstood traps in machine learning.

The Illusion of Accuracy

Most candidates proudly present accuracy as their primary metric.

But here’s the uncomfortable truth:

Accuracy can be dangerously misleading—especially in imbalanced datasets.

Example:

• 95% customers → NOT churning

• 5% customers → churning

If your model predicts “no churn” for everyone, you still get 95% accuracy.

But from a business perspective?

👉 Your model is completely useless.

What Businesses Actually Care About

Companies hiring for data science roles are not impressed by high accuracy.

They care about:

• Can you identify actual churners?

• Can your model help reduce revenue loss?

• Can it drive targeted retention campaigns?

This is where evaluation metrics become strategic, not just technical.

Metrics That Actually Matter

If you’re serious about cracking machine learning interviews, you need to shift your thinking:

- Precision

Out of all predicted churners, how many are actually churning?

➡️ Important when retention campaigns are expensive.

- Recall (Sensitivity)

Out of all actual churners, how many did you correctly identify?

➡️ Critical when missing a churner is costly.

- F1 Score

Balance between Precision and Recall.

➡️ Ideal when you need a trade-off.

- ROC-AUC

Measures model’s ability to distinguish between classes.

➡️ Preferred in many real-world ML systems.

The Root Cause: Class Imbalance

Most real-world datasets (fraud, churn, disease detection) are imbalanced.

Yet, many candidates trained through generic courses miss this entirely.

This is exactly where industry-relevant Data Science Training from us, at MatricsTek makes the difference.

How a Strong Candidate Fixes This Problem

Here’s what a top 10% candidate would say in an interview:

✅ 1. Reframe the Problem

“Accuracy is not the right metric here due to class imbalance. I would focus on Recall and F1-score.”

✅2. Handle Imbalance

• SMOTE (Synthetic Minority Oversampling)

• Undersampling majority class

• Class weights in models

✅ 3. Use Business-Aligned Metrics

• Customer Lifetime Value (CLV)

• Cost of false negatives vs false positives

• Retention uplift

✅ 4. Threshold Tuning

Instead of default 0.5 probability cutoff:

• Optimize threshold based on business impact

✅ 5. Build Actionable Output

Not just predictions, but:

• “Top 10% high-risk customers”

• Prioritized intervention list

💡 What Most Candidates Get Wrong

During job search for data roles, we consistently see candidates:

❌ Over-focus on accuracy

❌ Ignore business context

❌ Treat ML as a coding problem, not a decision-making tool

❌ Fail to connect model output to real-world action

What Hiring Managers Are Actually Testing

When they ask this question, they’re evaluating:

• Your understanding of real-world ML challenges

• Your ability to think beyond algorithms

• Your awareness of business impact

• Your maturity as a decision scientist, not just a model builder

How MatricsTek Positions You Differently

At MatricsTek Inc, we don’t just prepare you for interviews.

We prepare you for real machine learning jobs in USA.

Our approach to Data Science Training in USA-aligned curriculum focuses on:

• Scenario-based learning (like this one)

• Business-first thinking

• Hands-on case studies from real industries

• Interview-ready storytelling

Final Thought

A model that looks good on paper but fails in reality is not a model. It’s a mistake.

If you’re targeting data science and machine learning jobs in USA, remember:

👉 Companies don’t hire you for your accuracy score.

👉 They hire you for the decisions your model enables.